As generative AI becomes integrated into scholarly workflows, prompt design is emerging as an important research skill. The Task–Instruction–Context (TIC) framework provides a simple but powerful structure for constructing effective prompts.

Generative AI tools are increasingly used by academic authors to support activities such as outlining articles, drafting explanations, synthesising literature, or refining language. However, the quality of the output depends strongly on the structure of the prompt supplied to the system. Vague prompts tend to produce generic responses, whereas well-structured prompts can yield outputs that are far more relevant, precise, and academically appropriate.

One practical way to improve prompt quality is to use a Task–Instruction–Context (TIC) structure. Within the context component, authors can further specify key parameters using the acronym AVDSJL: Audience, Voice, Domain, Sector, Jurisdiction, and Language. This approach helps constrain the model’s response and align it with academic expectations.

This article explains the TIC structure and demonstrates how academic authors can apply it in research and scholarly writing.

Large language models generate responses probabilistically based on the text they receive as input. The prompt therefore acts as a specification of the task environment. In academic contexts—where precision, disciplinary conventions, and audience expectations matter—explicit prompting is especially important.

Research on prompt engineering has shown that clear instructions and contextual cues significantly improve model performance, particularly for complex tasks such as summarisation, reasoning, and domain-specific writing (Brown et al., 2020; Wei et al., 2022). Providing structured prompts also reduces ambiguity and improves reproducibility when AI tools are used as part of scholarly workflows.

For academic authors, prompt structure serves three main purposes:

The TIC framework operationalises these goals.

The TIC structure divides a prompt into three logical components: Task, Instruction, and Context.

A basic prompt structure might therefore look like this:

Prompt Template

Task: [What you want the AI to produce]

Instruction: [Specific requirements for how the task should be performed]

Context includes:

Audience:

Voice:

Domain:

Sector:

Jurisdiction:

Language:

Each component plays a distinct role in guiding the model’s response.
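The template can also be kept as a small data structure, so that a prompt is rendered the same way each time and unfilled context fields are easy to spot. The following is a minimal sketch, not a prescribed implementation: the names `TICPrompt`, `AVDSJL_FIELDS`, `render`, and `missing_context` are hypothetical, and the task and instruction strings are taken from the examples in this article.

```python
from dataclasses import dataclass, field

# Context labels in the order given by the AVDSJL acronym.
AVDSJL_FIELDS = ("Audience", "Voice", "Domain", "Sector", "Jurisdiction", "Language")


@dataclass
class TICPrompt:
    """Hypothetical container for a Task-Instruction-Context prompt."""
    task: str
    instruction: str
    context: dict[str, str] = field(default_factory=dict)

    def render(self) -> str:
        """Return the prompt as plain text, following the template layout."""
        lines = [
            f"Task: {self.task}",
            "",
            f"Instruction: {self.instruction}",
            "",
            "Context includes:",
        ]
        for name in AVDSJL_FIELDS:
            value = self.context.get(name, "")
            # An unfilled field is rendered as a bare label, e.g. "Audience:".
            lines.append(f"{name}: {value}".rstrip())
        return "\n".join(lines)

    def missing_context(self) -> list[str]:
        """List the AVDSJL fields that have not been filled in yet."""
        return [name for name in AVDSJL_FIELDS if not self.context.get(name)]


prompt = TICPrompt(
    task="Write an explanation of the concept of knowledge management.",
    instruction="Define the concept using the genus–differentia form "
                "commonly used in terminology science.",
)
rendered = prompt.render()
missing = prompt.missing_context()
print(rendered)
print("Still to specify:", ", ".join(missing))
```

Because no context has been supplied here, `missing_context()` reports all six AVDSJL fields, which is a useful reminder before the prompt is sent to a model.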

The Task specifies the primary activity the AI should perform. In academic writing, typical tasks include:

The task should be expressed as a clear action verb. Vague task statements (e.g., “help with my paper”) leave the model to infer intent and typically produce weaker outputs.

Example:

Task:

Write an explanation of the concept of knowledge management.

The Instruction component defines constraints, format requirements, or methodological expectations.

Examples include:

Example:

Instruction:

Define the concept using the genus–differentia form commonly used in terminology science and include at least two academic citations (Harvard); limit to 200 words.

By separating the Task from the Instruction, the prompt becomes clearer and easier to refine.
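One way to see the benefit of this separation is to hold the Task constant and revise only the Instruction. The sketch below assumes a hypothetical `Prompt` class with two fields and uses the standard-library `dataclasses.replace` to produce the revised version.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Prompt:
    """Hypothetical two-part prompt: what to do, and how to do it."""
    task: str
    instruction: str


base = Prompt(
    task="Write an explanation of the concept of knowledge management.",
    instruction="Define the concept using the genus–differentia form "
                "commonly used in terminology science.",
)

# Tighten only the instruction; the task stays exactly as it was.
refined = replace(
    base,
    instruction=base.instruction
    + " Include at least two academic citations (Harvard); limit to 200 words.",
)

print(refined.task)
print(refined.instruction)
```

Keeping the two parts in separate fields means each refinement can be compared against the previous version without rereading the whole prompt.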

The Context component situates the output within a specific communicative environment. Academic writing varies significantly depending on discipline, audience, and jurisdictional conventions.

The AVDSJL mnemonic helps ensure that key contextual parameters are specified (see image below).

Specifying the audience sets the level of explanation and the amount of prior knowledge the model should assume of the target reader.

Examples:

Voice specifies stylistic expectations.

Examples:

The domain situates the response within a specific knowledge discipline.

Examples:

The sector indicates the applied context.

Examples:

Jurisdiction matters for topics such as law, regulation, or policy.

Examples:

Specifying language is particularly useful in multilingual research contexts.

Example:

Below is an example of the TIC–AVDSJL approach applied to a typical academic writing task.

Prompt Example

Task:

Write a concise definition of artificial intelligence.

Instruction:

Use the genus–differentia definition structure and include one citation to an international standard.

Context:

Audience: Engineering students

Voice: Academic and instructional

Domain: Information technology

Sector: Information media and telecommunications

Jurisdiction: International standards environment

Language: English

This structured prompt combines a task and an instruction with six contextual constraints, which makes a relevant response considerably more likely.

Using the TIC–AVDSJL structure offers several advantages for researchers and scholarly writers.

Explicit parameters reduce ambiguity and improve alignment with disciplinary expectations.

Researchers can store prompt templates in a personal prompt library for recurring tasks such as:

Structured prompts support methodological transparency, particularly when AI is used as part of research workflows.
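A simple way to support this kind of transparency is to keep a record of each prompt exactly as it was used. The sketch below is one possible approach under stated assumptions: the function name `prompt_record`, the record fields, and the placeholder model name `example-model` are all hypothetical, and only Python's standard library is used.

```python
import hashlib
import json
from datetime import datetime, timezone


def prompt_record(prompt_text: str, model_name: str) -> dict:
    """Build a log entry that identifies the exact prompt that was used."""
    digest = hashlib.sha256(prompt_text.encode("utf-8")).hexdigest()
    return {
        "prompt": prompt_text,
        "sha256": digest,        # changes if even one character of the prompt changes
        "model": model_name,     # placeholder; record whichever tool was actually used
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }


record = prompt_record(
    "Task: Write a concise definition of artificial intelligence.",
    model_name="example-model",
)
print(json.dumps(record, indent=2, ensure_ascii=False))
```

Storing the hash alongside the text makes it easy to confirm later that a reported prompt is identical to the one that produced a given output.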

Providing contextual signals (discipline, audience, sector) improves the semantic relevance of generated text.

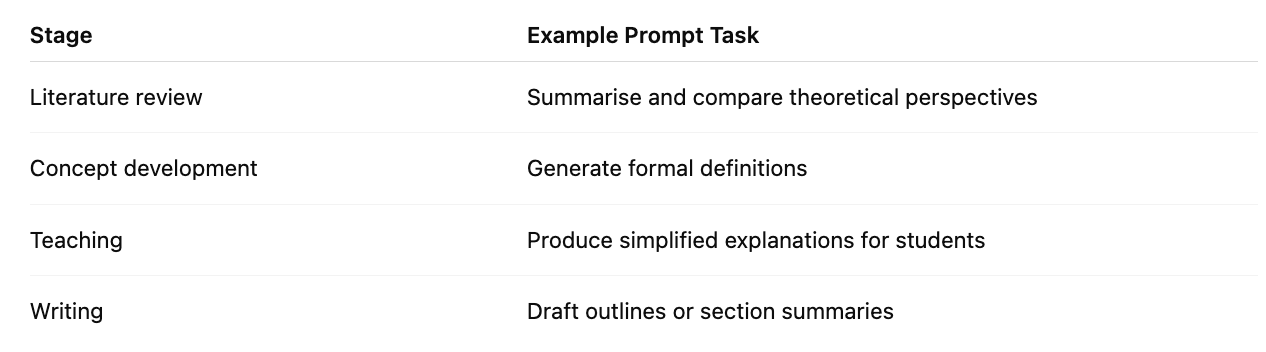

Academic authors can incorporate the TIC framework into several stages of research writing:

Maintaining a library of structured prompts can further streamline these processes.
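A prompt library can be as simple as a dictionary of reusable templates saved as JSON. The following sketch assumes a hypothetical library with a single entry named `definition` and a hypothetical helper `build`; the placeholder syntax comes from Python's standard `string.Template`.

```python
import json
from string import Template

# Hypothetical library: each entry stores a Task and an Instruction template.
library = {
    "definition": {
        "task": "Write a concise definition of $concept.",
        "instruction": "Use the genus–differentia definition structure and "
                       "include one citation to an international standard.",
    }
}


def build(name: str, **values: str) -> str:
    """Fill in a stored template and return the Task and Instruction text."""
    entry = library[name]
    task = Template(entry["task"]).substitute(values)
    instruction = Template(entry["instruction"]).substitute(values)
    return f"Task: {task}\n\nInstruction: {instruction}"


text = build("definition", concept="artificial intelligence")
print(text)

# The library serialises to JSON, so it can be saved and shared as a file.
serialised = json.dumps(library, indent=2, ensure_ascii=False)
restored = json.loads(serialised)
```

The context fields can be stored in the same way; they are left out here to keep the example short.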

By combining TIC with the AVDSJL contextual model, academic authors can specify the communicative environment in which the output should operate. The result is more precise, discipline-appropriate, and useful AI-generated content.

For researchers seeking to incorporate AI tools responsibly into academic work, structured prompting represents an essential methodological practice.

Bommasani, R. et al. (2021) On the opportunities and risks of foundation models. Stanford Center for Research on Foundation Models. Available at: https://arxiv.org/abs/2108.07258

Brown, T. et al. (2020) ‘Language models are few-shot learners’, Advances in Neural Information Processing Systems, 33, pp. 1877–1901.

ISO (2019) ISO 704: Terminology work — Principles and methods. Geneva: International Organization for Standardization.

OpenAI (2024) Prompt engineering best practices. Available at: https://platform.openai.com/docs/guides/prompt-engineering

Reynolds, L. and McDonell, K. (2021) ‘Prompt programming for large language models: Beyond the few-shot paradigm’, Proceedings of the CHI Conference on Human Factors in Computing Systems Extended Abstracts.

Wei, J. et al. (2022) ‘Chain-of-thought prompting elicits reasoning in large language models’, Advances in Neural Information Processing Systems, 35.