AI can generate literature reviews that look academically credible and align structurally with the core functions of scholarly synthesis. However, credibility in academic work is not defined by structure alone—it depends on depth, rigour, and verifiability.

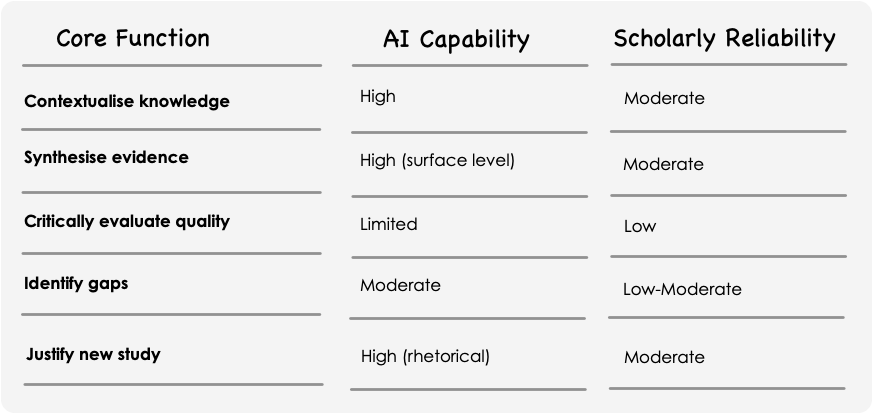

Artificial intelligence is increasingly embedded in academic workflows, particularly in the production of literature reviews. Tools powered by large language models promise rapid synthesis, coherent structure, and broad coverage. Yet a literature review is not simply a summary—it performs a set of core scholarly functions: contextualising knowledge, synthesising evidence, critically evaluating quality, identifying gaps, and justifying new research.

This article examines how AI performs each of these functions in practice, and where its capabilities align with, or fall short of, academic expectations.

At a technical level, AI-generated literature reviews combine three processes:

This pipeline allows AI to approximate the structure of academic writing—but approximation is not equivalence. The distinction becomes clear when evaluated against the core functions of a literature review.

Contextualising knowledge

What the literature review requires

A robust review situates a topic within its intellectual lineage—key theories, debates, and turning points.

How AI performs

AI retrieves relevant sources and generates summaries that position a topic within a broader field. It can efficiently outline dominant theories and recurring concepts.

Where it works well

Where it falls short

Verdict: Strong for orientation; weaker for nuanced intellectual positioning

Synthesising evidence

What the literature review requires

Synthesis involves integrating findings across studies and identifying the patterns, contradictions, and relationships among them.

How AI performs

AI groups similar findings using semantic similarity and generates thematic summaries across sources.
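A minimal sketch of similarity-based grouping may make this concrete. It is not how any particular tool works: real systems typically use learned embeddings, whereas this example substitutes simple word counts so that it runs with the standard library alone. The function names, the `threshold` value, and the sample findings are all assumptions made for illustration.

```python
import math
import re
from collections import Counter


def vectorise(text):
    """Toy stand-in for an embedding: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z]+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def group_findings(findings, threshold=0.3):
    """Greedy grouping: a finding joins the first group whose seed it resembles."""
    groups = []
    for text in findings:
        vec = vectorise(text)
        for group in groups:
            if cosine(vec, group["seed"]) >= threshold:
                group["members"].append(text)
                break
        else:
            # No existing group is similar enough, so start a new theme.
            groups.append({"seed": vec, "members": [text]})
    return [g["members"] for g in groups]


# Hypothetical findings, invented for this example.
findings = [
    "Peer feedback improves student writing quality",
    "Student writing quality improves with peer feedback",
    "Peer feedback does not improve student writing quality",
    "Sleep deprivation reduces exam performance",
]
groups = group_findings(findings)
for members in groups:
    print(members)
```

In this toy run the third finding, which contradicts the first two, lands in the same group because it shares most of their vocabulary. Surface similarity places it in the theme without registering the disagreement, which a reviewer would still need to spot.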

Where it works well

Where it falls short

Verdict: Efficient but often superficial

Critically evaluating quality

What the literature review requires

Scholarly reviews assess the methodological rigour, bias, validity, and reliability of the studies they include.

How AI performs

AI can mimic evaluation frameworks and generate structured critiques when prompted.

Where it works well

Where it falls short

Verdict: Structurally competent, substantively limited

Identifying gaps

What the literature review requires

A key contribution of a review is identifying what is not yet known—genuine gaps in the evidence base.

How AI performs

AI infers gaps by detecting underrepresented themes or inconsistencies across retrieved material.
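The idea of flagging underrepresented themes can be sketched in a few lines. This is an illustration under stated assumptions, not a description of any specific system: the theme tags, the source names, and the `min_share` cut-off are all hypothetical, and the sketch assumes each retrieved source has already been tagged with themes.

```python
from collections import Counter


def underrepresented_themes(tagged_sources, min_share=0.2):
    """Return themes that appear in fewer than min_share of the retrieved sources."""
    counts = Counter(
        theme for themes in tagged_sources.values() for theme in themes
    )
    total = len(tagged_sources)
    return sorted(t for t, n in counts.items() if n / total < min_share)


# Hypothetical retrieved set: source name -> themes it covers.
retrieved = {
    "source_a": {"adoption", "accuracy"},
    "source_b": {"adoption"},
    "source_c": {"accuracy", "ethics"},
    "source_d": {"adoption", "accuracy"},
    "source_e": {"adoption"},
    "source_f": {"accuracy"},
}
candidates = underrepresented_themes(retrieved)
print(candidates)
```

Here "ethics" is flagged because it appears in only one of six retrieved sources. The count describes the retrieved set only: a theme can look sparse because the retrieval missed the relevant studies, so a flagged theme is a candidate gap to check against the wider literature rather than a confirmed one.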

Where it works well

Where it falls short

Verdict: Useful for brainstorming, not validation

Justifying new research

What the literature review requires

A review must build a compelling argument that a proposed study is necessary, original, and valuable.

How AI performs

AI assembles conventional academic arguments using learned rhetorical patterns (e.g., “Despite extensive research on X, limited attention has been given to Y…”).

Where it works well

Where it falls short

Verdict: Persuasive in form, variable in substance

A defining feature of rigorous literature reviews—especially systematic reviews—is transparency and reproducibility. Frameworks such as PRISMA require:

AI-generated reviews typically do not provide this audit trail, making it difficult to assess completeness or bias.
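To illustrate what such an audit trail could look like in practice, the sketch below records each search and each screening decision so that the final count of included studies can be traced back to its inputs. The class names, field names, database names, queries, dates, and counts are all hypothetical, and the structure is a simplification for illustration, not PRISMA's official reporting checklist.

```python
from dataclasses import dataclass, field


@dataclass
class SearchRecord:
    """One database search, logged so it can be rerun later."""
    database: str
    query: str
    run_date: str  # ISO date string
    records_found: int


@dataclass
class ScreeningLog:
    """Tracks records from identification through to inclusion."""
    searches: list = field(default_factory=list)
    duplicates_removed: int = 0
    excluded: dict = field(default_factory=dict)  # exclusion reason -> count

    def identified(self):
        return sum(s.records_found for s in self.searches)

    def included(self):
        return self.identified() - self.duplicates_removed - sum(self.excluded.values())


# Hypothetical values, invented for this example.
log = ScreeningLog()
log.searches.append(SearchRecord("ExampleDB-1", '"peer feedback" AND writing', "2024-01-15", 120))
log.searches.append(SearchRecord("ExampleDB-2", '"peer feedback" AND writing', "2024-01-15", 80))
log.duplicates_removed = 30
log.excluded = {"off topic": 140, "no full text": 10}

print(log.identified(), log.included())
```

With a log like this, a reader can see which sources were searched, with what query, and why each record was dropped. That is the kind of information a generated review usually leaves out.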

Across all five functions, several constraints persist:

These are not minor issues—they directly affect the validity of a literature review.

AI is best understood as a cognitive augmentation tool, not a substitute for scholarly expertise.

But it does not replace the need for:


Until AI systems can transparently access, evaluate, and justify evidence at the level required by frameworks such as PRISMA, the responsibility for producing a defensible literature review remains firmly with the human researcher.

Written by ChatGPT